SQL Monitor details for later tuning. 29 March 2012

Posted by David Alejo Marcos in Exadata, Oracle 11.2, RAC, SQL - PL/SQL, Tuning. Tags: Exadata, Oracle 11.2, RAC, SQL, SQL - PL/SQL, Tuning

Tuning has always been good fun, and something of a challenge, for me.

From time to time we are asked to find out why something ran slowly while we were asleep; answering this question is, in most cases, a challenge.

The problem:

“My batch ran slowly last night, can you let us know why?” or “Why did this query run slowly?” are questions we, as DBAs, have to answer from time to time.

The solution:

Oracle has provided us with many tools to dig out information about past operations. We have EM, AWR, ASH, dba_hist_* tables, scripts all over the internet, etc.

I must admit I use SQL Monitor quite often but, in a really busy environment, Oracle will only keep, with any luck, a couple of hours of SQL statements.

V$SQL_MONITOR and dbms_sqltune.report_sql_monitor have become the tools I use most frequently.

The only problem I have is, as mentioned earlier, the number of SQL statements stored in v$sql_monitor or, rather, the length of time they are kept there.

Oracle will keep a certain number of SQL statements (defined by a hidden parameter) and then start recycling them so, by the time I am in the office, any SQL executed during the batch is long gone.

For this reason I came up with the following. I must admit it is not rocket science, but it does help me quite a lot.

It is like my small collection of “Bad Running Queries”. I only need another DBA, or an operator with certain privileges, to execute a simple procedure so that I can later try to find out what happened.

We need the following objects:

1.- A table to store the data:

CREATE TABLE perflog (
    asof           DATE,
    userid         VARCHAR2(30),
    sql_id         VARCHAR2(30),
    monitor_list   CLOB,
    monitor        CLOB,
    monitor_detail CLOB
);

2.- A procedure to insert the data I need for tuning:

CREATE OR REPLACE PROCEDURE perflog_pr (p_sql_id VARCHAR2 DEFAULT 'N/A')

AS

BEGIN

IF p_sql_id = 'N/A'

THEN

INSERT INTO perflog

SELECT SYSDATE,

sys_context('USERENV', 'SESSION_USER'),

p_sql_id,

sys.DBMS_SQLTUNE.report_sql_monitor_list (TYPE => 'HTML',

report_level => 'ALL'),

NULL,

NULL

FROM DUAL;

ELSE

INSERT INTO perflog

SELECT SYSDATE,

sys_context('USERENV', 'SESSION_USER'),

p_sql_id,

sys.DBMS_SQLTUNE.report_sql_monitor_list (TYPE => 'HTML',

report_level => 'ALL'),

sys.DBMS_SQLTUNE.report_sql_monitor (sql_id => p_sql_id,

TYPE => 'ACTIVE',

report_level => 'ALL'),

sys.DBMS_SQLTUNE.report_sql_detail (sql_id => p_sql_id,

TYPE => 'ACTIVE',

report_level => 'ALL')

FROM DUAL;

END IF;

COMMIT;

END;

/

3.- Grant necessary permissions:

grant select, insert on perflog to public
/
create public synonym perflog for perflog
/
grant execute on perflog_pr to public
/
create public synonym perflog_pr for perflog_pr
/
grant select any table, select any dictionary to <owner_code>
/

The way it works is as follows:

– If the business complains about a specific query, the DBA or operator can call the procedure with the sql_id:

exec perflog_pr ('1f52b50sq59q');

This will store the date and time, the sql_id, the DBA/operator name and, most importantly, the status of the instance at that time: the list of monitored statements, the SQL Monitor report for the statement and a detailed report for the statement that is running slowly.

– If the business complains about slowness but does not provide a specific query, we execute the following:

exec perflog_pr;

This will store the date and time, the DBA/operator name and a general view of the instance (the list of monitored statements); the sql_id is stored as 'N/A'.
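To read a stored report back later, one option is to spool the CLOB to a file from SQL*Plus. The helper below is only a minimal sketch and not part of the original setup: the function name `perflog_spool_script` and the default output file name are hypothetical. It assumes the `perflog` table defined above, fetches the most recent `monitor` report for one sql_id, and only prints the SQL*Plus commands so they can be reviewed before being piped to sqlplus.

```shell
# Print SQL*Plus commands that spool the latest stored SQL Monitor report
# for one sql_id. Function name and default file name are hypothetical.
perflog_spool_script() {
    sql_id=$1
    out_file=${2:-perflog_${sql_id}.html}
    cat <<EOF
set long 10000000 longchunksize 10000000
set pagesize 0 linesize 32767 trimspool on
set heading off feedback off
spool ${out_file}
select monitor
  from (select monitor
          from perflog
         where sql_id = '${sql_id}'
         order by asof desc)
 where rownum = 1;
spool off
exit
EOF
}
```

For example, `perflog_spool_script 1f52b50sq59q | sqlplus -s '/as sysdba'` should leave a file called perflog_1f52b50sq59q.html in the current directory.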

Please give it a go and let me know your thoughts.

As always, comments are welcome.

David Alejo-Marcos.

David Marcos Consulting Ltd.

Extending Oracle Enterprise Manager (EM) monitoring. 29 January 2012

Posted by David Alejo Marcos in Grid Control, Oracle 11.2, SQL - PL/SQL. Tags: Grid Control, Oracle 11.2, SQL, SQL - PL/SQL

I always found Oracle Enterprise Manager (EM) to be an interesting tool for different reasons. The only thing I missed was an easy way to create my own alerts.

It is very simple to create a KSH, Perl, etc script to do some customised monitoring and notify you by email, Nagios, NetCool, etc.

By integrating your scripts with OEM, you will have an easy way to enhance your monitoring and still have notification by email, SNMP traps, etc. as you would currently have if your company is using OEM for monitoring your systems.

The problem:

Develop an easy way to integrate your monitoring scripts with OEM.

The solution:

I decided to use an Oracle type and an Oracle function to accomplish this goal. Using the steps described below, we can monitor pretty much any aspect of the database, provided you can put the logic into a function.

As an example, I have added the steps to create two new User-Defined SQL Metrics, as Oracle calls them:

1.- Long Running Sessions (LRS).

2.- Tablespace Monitoring.

The reason to have my own tablespace monitoring is to improve on the existing one, as it has “hard-coded” thresholds. I might have tablespaces in my database which are 6 TB in size and others with only 2 GB, so raising an alert at 95% for both of them is, in my opinion, not adequate.

You can find more about the query I developed here.

The steps to create a script to monitor long running sessions (LRS) are:

1.- create types

CREATE OR REPLACE TYPE lrs_obj as OBJECT (
    user_name     VARCHAR2(256),
    error_message varchar(2000));
/
CREATE OR REPLACE TYPE lrs_array AS TABLE OF lrs_obj;
/

2.- create function.

CREATE OR REPLACE FUNCTION lrs RETURN lrs_array IS
    long_running_data lrs_array := lrs_array();
    ln_seconds_active number := 300;
BEGIN
    SELECT lrs_obj(username||' Sec: '||sec_running,
                   ', Inst_id: '||inst_id||', SID: '||sid||', Serial: '|| serial||
                   ', Logon: '||session_logon_time||', sql_id: '||sql_id)
      BULK COLLECT INTO long_running_data
      FROM (SELECT /*+ FIRST_ROWS USE_NL(S,SQ,P) */
                   s.inst_id inst_id,
                   s.sid sid,
                   s.serial# serial,
                   s.last_call_et sec_running,
                   NVL(s.username, '(oracle)') AS username,
                   to_char(s.logon_time, 'DD-MM-YYYY HH24:MI:SS') session_logon_time,
                   s.machine,
                   NVL(s.osuser, 'n/a') AS osuser,
                   NVL(s.program, 'n/a') AS program,
                   s.event,
                   s.seconds_in_wait,
                   s.sql_id sql_id,
                   sq.sql_text
              from gv$session s, gv$sqlarea sq
             where s.sql_id = sq.sql_id
               and s.inst_id = sq.inst_id
               and s.status = 'ACTIVE'
               and s.last_call_et > ln_seconds_active
               and s.paddr not in ( select paddr from gv$bgprocess where paddr != '00' )
               and s.type != 'BACKGROUND'
               and s.username not in ( 'SYSTEM', 'SYS' )
               AND s.event != 'SQL*Net break/reset to client' ) CUSTOMER_QUERY;
    RETURN long_running_data;
END lrs;
/

3.- Grant privileges to the users who will be executing the monitoring scripts:

grant execute on lrs_obj to public;
grant execute on lrs_array to public;
grant execute on lrs to public;

4.- create synonyms

Create public synonym lrs_obj for lrs_obj;
Create public synonym lrs_array for lrs_array;
Create public synonym lrs for lrs;

5.- Query to monitor

SELECT user_name, error_message FROM TABLE(CAST(lrs as lrs_array));

Once we are satisfied with the thresholds (300 seconds on the script), we are ready to add it to EM.

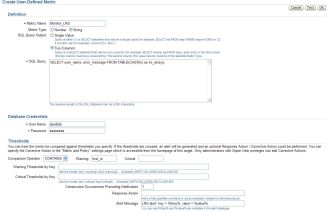

1.- Navigate to User-Defined SQL Metrics (you need to navigate to your database and you will find the link at bottom).

2.- Create new User-Defined SQL Metric and fill the gaps (I have attached some values for reference). The most important thing right now is to make sure metric_Type = String, Sql Query Output = two columns, comparison Operator = CONTAINS and warning has a value returned by the query (i did decide to go for Inst_id).

The only thing left now is to add this monitoring to your templates and to your rules so notifications are being sent.

Once all has been configure, you should start seeing alerts like this:

Target Name=lab01
Target type=Cluster Database
Host=mylab
Occurred At=Jan 22, 2012 14:35:47 PM GMT
Message=LRS alert: key = DAVIDLAB Sec: 530, value = , Inst_id: 1, SID: 153, Serial: 1597, Logon: 22-01-2012 12:21:46, sql_id: 3m72fjep12w8r
Metric=StrValue
Metric value=, Inst_id: 1, SID: 153, Serial: 1597, Logon: 22-01-2012 12:21:46, sql_id: 3m72fjep12w8r
Metric ID=lrs
Key=DAVIDLAB Sec: 530
Severity=Warning
Acknowledged=No
Notification Rule Name=david alerts
Notification Rule Owner=DAVIDLAB

For Tablespace monitoring the steps will be the same as described above:

1.- create types

CREATE OR REPLACE TYPE tbs_obj as OBJECT (
    tablespace_name VARCHAR2(256),
    error_message   varchar(2000));
/
CREATE OR REPLACE TYPE tbs_array AS TABLE OF tbs_obj;
/

2.- create function.

CREATE OR REPLACE FUNCTION calc_tbs_free_mb RETURN tbs_array IS

tablespace_data tbs_array := tbs_array();

BEGIN

SELECT tbs_obj(tablespace_name, alert||', Used_MB: '||space_used_mb||', PCT_Free: '||pct_free||', FreeMB: '|| free_space_mb) BULK COLLECT

INTO tablespace_data

FROM

(SELECT (CASE

WHEN free_space_mb <= DECODE (allocation_type, 'UNIFORM', min_extlen, maxextent) * free_extents THEN 'CRITICAL'

WHEN free_space_mb <= DECODE (allocation_type, 'UNIFORM', min_extlen, maxextent) * free_extents + 20 THEN 'WARNING'

ELSE 'N/A'

END)

alert,

tablespace_name,

space_used_mb,

ROUND (free_space/power(1024,3), 2) free_gb,

free_space_mb,

pct_free,

ROUND (extend_bytes/power(1024,3), 2) extend_gb,

free_extents,

max_size_gb,

maxextent

FROM (SELECT c.tablespace_name,

NVL (ROUND ( (a.extend_bytes + b.free_space) / (bytes + a.extend_bytes) * 100,2), 0) pct_free,

NVL ( ROUND ( (a.extend_bytes + b.free_space) / 1024 / 1024, 2), 0) free_space_mb,

(CASE

WHEN NVL ( ROUND ( (maxbytes) / power(1024,3), 2), 0) <= 30 THEN 60

WHEN NVL ( ROUND ( (maxbytes) / power(1024,3), 2), 0) <= 100 THEN 120

WHEN NVL ( ROUND ( (maxbytes) / power(1024,3), 2), 0) <= 300 THEN 200

WHEN NVL ( ROUND ( (maxbytes) / power(1024,3), 2), 0) <= 800 THEN 300

ELSE 340

END) free_extents,

a.extend_bytes, b.free_space,

ROUND (maxbytes /power(1024,3), 2) max_size_gb,

nvl (round(a.bytes - b.free_space ,2) /1024/1024, 0) space_used_mb,

c.allocation_type,

GREATEST (c.min_extlen / 1024 / 1024, 64) min_extlen,

64 maxextent

FROM ( SELECT tablespace_name,

SUM(DECODE (SIGN (maxbytes - BYTES), -1, 0, maxbytes - BYTES)) AS extend_bytes,

SUM (BYTES) AS BYTES,

SUM (maxbytes) maxbytes

FROM DBA_DATA_FILES

GROUP BY tablespace_name) A,

( SELECT tablespace_name,

SUM (BYTES) AS free_space,

MAX (BYTES) largest

FROM DBA_FREE_SPACE

GROUP BY tablespace_name) b,

dba_tablespaces c

WHERE c.contents not in ('UNDO','TEMPORARY') and

b.tablespace_name(+) = c.tablespace_name

AND a.tablespace_name = c.tablespace_name

)

) CUSTOMER_QUERY;

RETURN tablespace_data;

END calc_tbs_free_mb;

/

3.- Grant privileges to the users who will be executing the monitoring scripts:

grant execute on tbs_obj to public;
grant execute on tbs_array to public;
grant execute on calc_tbs_free_mb to public;

4.- create synonyms

Create public synonym tbs_obj for tbs_obj;
Create public synonym tbs_array for tbs_array;
Create public synonym calc_tbs_free_mb for calc_tbs_free_mb;

5.- Query to monitor

SELECT * FROM TABLE(CAST(calc_tbs_free_mb as tbs_array));

Please, remember to use comparison operator = CONTAINS, warning = WARNING and critical=CRITICAL
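The threshold logic inside calc_tbs_free_mb can be hard to see through the nested query, so here is a small sketch of the same decision in shell. It mirrors the two CASE expressions of the function; the helper names are hypothetical, inputs are whole numbers of MB and GB (a simplification), and the default extent size of 64 MB corresponds to `maxextent` and to the 64 MB floor applied to `min_extlen`.

```shell
# Extents that must remain allocatable, chosen from the tablespace
# maximum size in GB (same bands as the inner CASE expression).
free_extents_for() {
    max_gb=$1
    if   [ "$max_gb" -le 30 ];  then echo 60
    elif [ "$max_gb" -le 100 ]; then echo 120
    elif [ "$max_gb" -le 300 ]; then echo 200
    elif [ "$max_gb" -le 800 ]; then echo 300
    else echo 340
    fi
}

# Alert level for one tablespace.
# Arguments: free space in MB, maximum size in GB, extent size in MB (default 64).
tbs_alert() {
    free_mb=$1
    max_gb=$2
    extent_mb=${3:-64}
    reserve_mb=$(( extent_mb * $(free_extents_for "$max_gb") ))
    if   [ "$free_mb" -le "$reserve_mb" ];          then echo CRITICAL
    elif [ "$free_mb" -le $(( reserve_mb + 20 )) ]; then echo WARNING
    else echo N/A
    fi
}
```

With these bands a tablespace whose maximum size is 6 TB needs 340 x 64 MB = 21760 MB free before it stops being CRITICAL, while one capped at 30 GB needs 60 x 64 MB = 3840 MB, which is the point of sizing the threshold per tablespace rather than using a single percentage.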

As always, comments are welcome.

David Alejo-Marcos.

David Marcos Consulting Ltd.

Executing sql on all Exadata nodes. 22 September 2011

Posted by David Alejo Marcos in Exadata, SQL - PL/SQL. Tags: Exadata, SQL - PL/SQL

From time to time, I have to run scripts or single commands on all nodes for Exadata. This can take some time.

The problem:

We have a request from our developers to flush the shared pool on all nodes on our UAT Exadata. This is due to a bug we are still experiencing.

The solution:

This is a typical request for my team, where we have to run something on all our nodes. Flushing the shared pool can be one of them.

Connecting and executing the same command 8 times, if you have a full rack, can be time-consuming and is probably not the most exciting task to do.

For this reason I came up with the following script. It is something I wrote to help myself, so please test it before using it in production.

#!/bin/ksh

###############################

##

## Script to execute sql commands on all Exadata nodes

##

## Owner: David Alejo-Marcos

##

## usage: Exadata_sql_exec.ksh

## i.e.: Exadata_sql_exec.ksh MYTESTDB flush_shared_pool.sql

##

## Example of test.sql:

##

## oracle@hostname1 (MYTESTDB1)$ cat test.sql

## select sysdate from dual;

## select 'this is my test' from dual;

##

## alter system switch logfile;

##

###############################

NO_OF_ARGS=$#

typeset -u DB_SRC=$1

typeset -l DB_SCRIPT1=$2

typeset -l DB_SCRIPT=$2_tmp

if [[ "$NO_OF_ARGS" = "2" ]]; then

echo "Parameters passed: OK"

else

echo "usage: Exadata_sql_exec.ksh"

echo "i.e.: Exadata_sql_exec.ksh MYTESTDB flush_shared_pool.sql"

exit 1

fi

echo "sqlplus -s '/as sysdba' <<EOF" >/tmp/${DB_SCRIPT}

echo "set linesize 500" >>/tmp/${DB_SCRIPT}

echo "set serveroutput on" >>/tmp/${DB_SCRIPT}

echo "select host_name, instance_name from v\\\$instance;" >>/tmp/${DB_SCRIPT}

echo "@/tmp/${DB_SCRIPT1}" >>/tmp/${DB_SCRIPT}

echo "exit;" >>/tmp/${DB_SCRIPT}

echo "EOF" >>/tmp/${DB_SCRIPT}

# Copy both scripts to all Exadata DB Servers.

dcli -l oracle -g ~/dbs_group -f /tmp/${DB_SCRIPT} -d /tmp

dcli -l oracle -g ~/dbs_group -f ${DB_SCRIPT1} -d /tmp

# Execute command on all Exadata DB

dcli -l oracle -g ~/dbs_group " (chmod 700 /tmp/${DB_SCRIPT} ; /tmp/${DB_SCRIPT} ${DB_SRC} ; rm /tmp/${DB_SCRIPT} ; rm /tmp/${DB_SCRIPT1})"
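The series of echo commands above builds a small wrapper file that is then copied to every node. The sketch below shows an equivalent way to produce that wrapper with a single here-document, assuming the wrapper is meant to feed the commands to sqlplus through its own here-document (the closing EOF line written by the script suggests this). The function name `make_wrapper` is hypothetical. Note the escaping: `\\\$` becomes `\$` in the generated file, which in turn becomes a plain `$` when the wrapper itself runs, so sqlplus finally sees v$instance.

```shell
# Print the wrapper that would be copied to every node.
# sql_file is the name of the SQL script expected under /tmp on each node.
make_wrapper() {
    sql_file=$1
    cat <<OUTER
sqlplus -s '/as sysdba' <<EOF
set linesize 500
set serveroutput on
select host_name, instance_name from v\\\$instance;
@/tmp/${sql_file}
exit;
EOF
OUTER
}

# Example: write the wrapper to a file, as the script does with echo.
# make_wrapper flush_shared_pool.sql > /tmp/flush_shared_pool.sql_tmp
```

Printing the wrapper to standard output first makes it easy to inspect exactly what will run on each node before dcli distributes it.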

The sql file to be executed can be anything you would normally run from sqlplus.

Please bear in mind this script will be executed as many times as you have nodes on your Exadata, so if you create a table or you perform inserts, it will be done 8 times…
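Because the SQL file runs once per host listed in the dcli group file, it can help to count the hosts before launching anything that changes data. A small sketch, assuming the group file contains one host name per line (the helper name `node_count` is hypothetical):

```shell
# Count the hosts in a dcli group file, ignoring blank lines.
node_count() {
    group_file=$1
    # grep -c prints the number of lines with at least one non-blank character.
    grep -c '[^[:space:]]' "$group_file"
}

# Example: report how many times the SQL file would be executed.
# echo "Will run on $(node_count ~/dbs_group) nodes"
```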

Below is an output from a very simple test:

test.sql:

oracle@hostname1 (MYTESTDB1)$ cat test.sql
select sysdate from dual;
select 'this is my test' "ramdom_text" from dual;
alter system switch logfile;

Output:

oracle@hostname1 (MYTESTDB1)$ ./Exadata_sql_exec.ksh MYTESTDB test.sql
Parameters passed: OK
hostname1:
hostname1: Connect to instance MYTESTDB1 on hostname1
hostname1: ORACLE_HOME is set to /apps/oracle/server/product/11.2/dbhome_1
hostname1:
hostname1:
hostname1: HOST_NAME INSTANCE_NAME
hostname1: --------- ----------------
hostname1: hostname1 MYTESTDB1
hostname1:
hostname1:
hostname1: SYSDATE
hostname1: ---------
hostname1: 22-SEP-11
hostname1:
hostname1:
hostname1: ramdom_text
hostname1: ---------------
hostname1: this is my test
hostname1:
hostname1:
hostname1: System altered.
hostname1:
hostname2:
hostname2: Connect to instance MYTESTDB2 on hostname2
hostname2: ORACLE_HOME is set to /apps/oracle/server/product/11.2/dbhome_1
hostname2:
hostname2:
hostname2: HOST_NAME INSTANCE_NAME
hostname2: --------- ----------------
hostname2: hostname2 MYTESTDB2
hostname2:
hostname2:
hostname2: SYSDATE
hostname2: ---------
hostname2: 22-SEP-11
hostname2:
hostname2:
hostname2: ramdom_text
hostname2: ---------------
hostname2: this is my test
hostname2:
hostname2:
hostname2: System altered.
hostname2:
hostname3:
hostname3: Connect to instance MYTESTDB3 on hostname3
hostname3: ORACLE_HOME is set to /apps/oracle/server/product/11.2/dbhome_1
hostname3:
hostname3:
hostname3: HOST_NAME INSTANCE_NAME
hostname3: --------- ----------------
hostname3: hostname3 MYTESTDB3
hostname3:
hostname3:
hostname3: SYSDATE
hostname3: ---------
hostname3: 22-SEP-11
hostname3:
hostname3:
hostname3: ramdom_text
hostname3: ---------------
hostname3: this is my test
hostname3:
hostname3:
hostname3: System altered.
hostname3:
hostname4:
hostname4: Connect to instance MYTESTDB4 on hostname4
hostname4: ORACLE_HOME is set to /apps/oracle/server/product/11.2/dbhome_1
hostname4:
hostname4:
hostname4: HOST_NAME INSTANCE_NAME
hostname4: --------- ----------------
hostname4: hostname4 MYTESTDB4
hostname4:
hostname4:
hostname4: SYSDATE
hostname4: ---------
hostname4: 22-SEP-11
hostname4:
hostname4:
hostname4: ramdom_text
hostname4: ---------------
hostname4: this is my test
hostname4:
hostname4:
hostname4: System altered.
hostname4:
hostname5:
hostname5: Connect to instance MYTESTDB5 on hostname5
hostname5: ORACLE_HOME is set to /apps/oracle/server/product/11.2/dbhome_1
hostname5:
hostname5:
hostname5: HOST_NAME INSTANCE_NAME
hostname5: --------- ----------------
hostname5: hostname5 MYTESTDB5
hostname5:
hostname5:
hostname5: SYSDATE
hostname5: ---------
hostname5: 22-SEP-11
hostname5:
hostname5:
hostname5: ramdom_text
hostname5: ---------------
hostname5: this is my test
hostname5:
hostname5:
hostname5: System altered.
hostname5:
hostname6:
hostname6: Connect to instance MYTESTDB6 on hostname6
hostname6: ORACLE_HOME is set to /apps/oracle/server/product/11.2/dbhome_1
hostname6:
hostname6:
hostname6: HOST_NAME INSTANCE_NAME
hostname6: --------- ----------------
hostname6: hostname6 MYTESTDB6
hostname6:
hostname6:
hostname6: SYSDATE
hostname6: ---------
hostname6: 22-SEP-11
hostname6:
hostname6:
hostname6: ramdom_text
hostname6: ---------------
hostname6: this is my test
hostname6:
hostname6:
hostname6: System altered.
hostname6:
hostname7:
hostname7: Connect to instance MYTESTDB7 on hostname7
hostname7: ORACLE_HOME is set to /apps/oracle/server/product/11.2/dbhome_1
hostname7:
hostname7:
hostname7: HOST_NAME INSTANCE_NAME
hostname7: --------- ----------------
hostname7: hostname7 MYTESTDB7
hostname7:
hostname7:
hostname7: SYSDATE
hostname7: ---------
hostname7: 22-SEP-11
hostname7:
hostname7:
hostname7: ramdom_text
hostname7: ---------------
hostname7: this is my test
hostname7:
hostname7:
hostname7: System altered.
hostname7:
hostname8:
hostname8: Connect to instance MYTESTDB8 on hostname8
hostname8: ORACLE_HOME is set to /apps/oracle/server/product/11.2/dbhome_1
hostname8:
hostname8:
hostname8: HOST_NAME INSTANCE_NAME
hostname8: --------- ----------------
hostname8: hostname8 MYTESTDB8
hostname8:
hostname8:
hostname8: SYSDATE
hostname8: ---------
hostname8: 22-SEP-11
hostname8:
hostname8:
hostname8: ramdom_text
hostname8: ---------------
hostname8: this is my test
hostname8:
hostname8:
hostname8: System altered.
hostname8:

I have been told many times not to use quotes in the following command:

sqlplus '/as sysdba'

but:

1.- I am too used to it

2.- you only save 2 keystrokes at the end of the day 🙂

As always, comments are welcome.

David Alejo-Marcos.

David Marcos Consulting Ltd.

How to list files on a directory from Oracle Database. 13 September 2011

Posted by David Alejo Marcos in Exadata, Oracle 11.2, RAC, SQL - PL/SQL. Tags: Exadata, Oracle 11.2, RAC, SQL, SQL - PL/SQL

A couple of days ago I had an interesting request: “How can I see the contents of nfs_dir?”

The problem:

We were using DBFS to store our exports. This was the perfect solution as the business could “see” the files in the destination folder, but it did not meet our requirements performance-wise on our Exadata.

We decided to mount NFS and performance did improve, but we then had a different problem. NFS is mounted on the database server, and the business does not have access to it for reasons of security and segregation of duties.

Since then the export jobs have run, but the business could not “see” which files were created, so the question was asked.

The solution:

After some research I came across the following procedure:

SYS.DBMS_BACKUP_RESTORE.searchFiles

I had to play with it a little bit, and I ended up with the following script:

1.- Create an Oracle type

create type file_array as table of varchar2(100)
/

2.- Create the function as SYS:

CREATE OR REPLACE FUNCTION LIST_FILES (lp_string IN VARCHAR2 default null)
RETURN file_array pipelined AS
    lv_pattern VARCHAR2(1024);
    lv_ns VARCHAR2(1024);
BEGIN
    SELECT directory_path
      INTO lv_pattern
      FROM dba_directories
     WHERE directory_name = 'NFS_DIR';
    SYS.DBMS_BACKUP_RESTORE.SEARCHFILES(lv_pattern, lv_ns);
    FOR file_list IN (SELECT FNAME_KRBMSFT AS file_name
                        FROM X$KRBMSFT
                       WHERE FNAME_KRBMSFT LIKE '%'|| NVL(lp_string, FNAME_KRBMSFT)||'%' )
    LOOP
        PIPE ROW(file_list.file_name);
    END LOOP;
END;
/

3.- Grant necessary permissions:

grant execute on LIST_FILES to public;
create public synonym list_files for sys.LIST_FILES;

4.- Test without WHERE clause:

TESTDB> select * from table(list_files);

COLUMN_VALUE
----------------------------------------------------------------------------------------------------
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB.log
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece1.dmp
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece2.dmp
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece3.dmp
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece4.dmp
/nfs/oracle/TESTDB/pump_dir/imdp_piece_TESTDB_09092011.log
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece1.dmp_old
/nfs/oracle/TESTDB/pump_dir/imdp_piece_TESTDB_12092011.log

8 rows selected.

Elapsed: 00:00:00.10

5.- Test with WHERE clause:

TESTDB> select * from table(list_files) where column_value like '%dmp%';

COLUMN_VALUE
----------------------------------------------------------------------------------------------------
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece1.dmp
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece2.dmp
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece3.dmp
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece4.dmp
/nfs/oracle/TESTDB/pump_dir/expdp_TESTDB_piece1.dmp_old

Elapsed: 00:00:00.12

As always, comments are welcome.

David Alejo-Marcos.

David Marcos Consulting Ltd.

ORA-16072: a minimum of one standby database destination is required 27 August 2011

Posted by David Alejo Marcos in ASM, Exadata, Oracle 11.2, RAC, RMAN. Tags: ASM, backup, Dataguard, Exadata, Oracle 11.2, RAC, RMAN, Standby

This is a quick post regarding the error in the title. This is the second time it has happened to me, so I thought I would write a bit about it.

The problem:

I am refreshing one of my UAT environments (it happens to be a Full Rack Exadata) using the Oracle RMAN duplicate command. Then the following happens (on both occasions):

1.- The duplicate command fails (lack of space for restoring archivelogs, or any other error). This can be fixed quite easily.

2.- The following error appears while trying to open the database after the restore and recover have finished:

SQL> alter database open;
alter database open
*
ERROR at line 1:
ORA-03113: end-of-file on communication channel
Process ID: 13710
Session ID: 1250 Serial number: 5

SQL> exit

On the alert.log file we can read the following:

Wed Aug 24 13:32:48 2011
alter database open
Wed Aug 24 13:32:49 2011
LGWR: STARTING ARCH PROCESSES
Wed Aug 24 13:32:49 2011
ARC0 started with pid=49, OS id=13950
ARC0: Archival started
LGWR: STARTING ARCH PROCESSES COMPLETE
ARC0: STARTING ARCH PROCESSES
LGWR: Primary database is in MAXIMUM AVAILABILITY mode
LGWR: Destination LOG_ARCHIVE_DEST_1 is not serviced by LGWR
LGWR: Minimum of 1 LGWR standby database required
Errors in file /apps/oracle/server/diag/rdbms/xxxx04/xxxx041/trace/xxxx041_lgwr_13465.trc:
ORA-16072: a minimum of one standby database destination is required
Errors in file /apps/oracle/server/diag/rdbms/xxxx04/xxxx041/trace/xxxx041_lgwr_13465.trc:
ORA-16072: a minimum of one standby database destination is required
LGWR (ospid: 13465): terminating the instance due to error 16072
Wed Aug 24 13:32:50 2011
ARC1 started with pid=48, OS id=13952
Wed Aug 24 13:32:50 2011
System state dump is made for local instance
System State dumped to trace file /apps/oracle/server/diag/rdbms/xxxx04/xxxx041/trace/xxxx041_diag_13137.trc
Trace dumping is performing id=[cdmp_20110824133250]
Instance terminated by LGWR, pid = 13465

The Solution:

Quite simple:

1.- Start up database in mount mode:

SQL> startup mount
ORACLE instance started.

Total System Global Area 1.7103E+10 bytes
Fixed Size                  2230472 bytes
Variable Size            4731176760 bytes
Database Buffers         1.2180E+10 bytes
Redo Buffers              189497344 bytes
Database mounted.

SQL> select open_mode, DATABASE_ROLE, guard_status, SWITCHOVER_STATUS from v$database;

OPEN_MODE            DATABASE_ROLE    GUARD_S SWITCHOVER_STATUS
-------------------- ---------------- ------- --------------------
MOUNTED              PRIMARY          NONE    NOT ALLOWED

2.- Execute the following command:

SQL> alter database set standby database to maximize performance;

Database altered.

SQL> select open_mode, DATABASE_ROLE, guard_status, SWITCHOVER_STATUS from v$database;

OPEN_MODE            DATABASE_ROLE    GUARD_S SWITCHOVER_STATUS
-------------------- ---------------- ------- --------------------
MOUNTED              PRIMARY          NONE    NOT ALLOWED

3.- Stop database:

SQL> shutdown immediate
ORA-01109: database not open

Database dismounted.
ORACLE instance shut down.

4.- Start up database mount mode:

SQL> startup mount
ORACLE instance started.

Total System Global Area 1.7103E+10 bytes
Fixed Size                  2230472 bytes
Variable Size            4731176760 bytes
Database Buffers         1.2180E+10 bytes
Redo Buffers              189497344 bytes
Database mounted.

5.- Open database:

SQL> alter database open;

Database altered.

SQL> select open_mode from v$database;

OPEN_MODE
--------------------
READ WRITE

SQL> select instance_name from v$instance;

INSTANCE_NAME
----------------
xxxx041

SQL>

As always, comments are welcome.

David Alejo-Marcos.

David Marcos Consulting Ltd.

How to transfer files from ASM to another ASM or filesystem or DBFS… 23 July 2011

Posted by David Alejo Marcos in ASM, Exadata, Oracle 11.2. Tags: ASM, Exadata, Oracle 11.2

I had a requirement of transferring files from our PROD ASM to our UAT ASM as DBFS is proving to be slow.

The problem:

We are currently refreshing UAT schemas using Oracle Datapump to DBFS and then transferring those files to UAT using SCP.

DBFS does not provide us with the performance we need, as the Data Pump files are quite big. The same export onto ASM or NFS proves to be much, much faster.

We are currently testing exports to ASM, but how do we move the dmp files from PROD ASM to UAT ASM?

The solution:

The answer for us is using DBMS_FILE_TRANSFER. It is very simple to set up (steps below) and it has proved to be fast.

DBMS_FILE_TRANSFER will copy files from one ORACLE DIRECTORY to another ORACLE DIRECTORY. The directory can point to a folder on ASM, DBFS, Filesystem, etc, so the transfer is “heterogeneous”.

I decided to go for GET_FILE, so most of the work will be done on UAT; the other option is PUT_FILE.

The syntax is as follows:

DBMS_FILE_TRANSFER.GET_FILE (
    source_directory_object      IN VARCHAR2,
    source_file_name             IN VARCHAR2,
    source_database              IN VARCHAR2,
    destination_directory_object IN VARCHAR2,
    destination_file_name        IN VARCHAR2);

Where:

source_directory_object: The directory object from which the file is copied at the source site. This directory object must exist at the source site.

source_file_name: The name of the file that is copied in the remote file system. This file must exist in the remote file system in the directory associated with the source directory object.

source_database: The name of a database link to the remote database where the file is located.

destination_directory_object: The directory object into which the file is placed at the destination site. This directory object must exist in the local file system.

destination_file_name: The name of the file copied to the local file system. A file with the same name must not exist in the destination directory in the local file system.

These are the steps for my test:

1.- Create directory on destination:

oracle@sssss (+ASM1)$ asmcmd
ASMCMD> mkdir +DATA/DPUMP
ASMCMD> mkdir +DATA/DPUMP/sid
ASMCMD> exit

2.- Create directory on database

SQL> create directory SID_ASM_DPUMP_DIR as '+DATA/DPUMP/sid';

Directory created.

SQL> grant read, write, execute on directory SID_ASM_DPUMP_DIR to public;

Grant succeeded.

3.- Transfer file

SQL> set timing on time on

17:22:13 SQL> BEGIN

17:22:17 2 dbms_file_transfer.get_file ('SID_ASM_DPUMP_DIR',

17:22:17 3 'expdp.dmp',

17:22:17 4 'TESTSRC',

17:22:17 5 'SID_ASM_DPUMP_DIR',

17:22:17 6 'expdp.dmp');

17:22:17 7 END;

17:22:17 8

17:22:17 9 /

PL/SQL procedure successfully completed.

Elapsed: 00:01:07.57

17:23:31 SQL>

4.- Check the file is on the destination:

ASMCMD [+DATA/DPUMP/sid] > ls -ls
Type  Redund  Striped  Time  Sys  Name
                             N    expdp.dmp => +DATA/sid/DUMPSET/FILE_TRANSFER_0_0.797.756840143

OK, so ls -ls does not show us the size of the file in the destination directory. The reason is that the entry is an alias; you need to run ls -s (ls -ls below) against the directory where the file is actually stored.

ASMCMD [+DATA/DPUMP/sid] > ls -ls +DATA/sid/DUMPSET/
Type     Redund  Striped  Time             Sys  Block_Size   Blocks       Bytes        Space  Name
DUMPSET  MIRROR  COARSE   JUL 18 17:00:00  Y          4096  1784576  7309623296  14633926656  FILE_TRANSFER_0_0.797.756840143

So, we have managed to transfer 6.8GB of data between two remote servers in 1 minute 8 seconds… not bad.
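These figures can be checked with a little arithmetic. The sketch below (helper names are hypothetical) uses awk for the floating-point maths, taking the Bytes value reported by asmcmd and the elapsed time reported by SQL*Plus (00:01:07.57, that is 67.57 seconds).

```shell
# Convert a byte count to GiB, two decimal places.
bytes_to_gib() {
    awk -v bytes="$1" 'BEGIN { printf "%.2f\n", bytes / 1073741824 }'
}

# Average throughput in MiB per second, one decimal place.
# Arguments: bytes transferred, elapsed seconds.
throughput_mib_s() {
    awk -v bytes="$1" -v secs="$2" 'BEGIN { printf "%.1f\n", bytes / secs / 1048576 }'
}

# Example with the numbers from this test:
# bytes_to_gib 7309623296            # size of the dump file
# throughput_mib_s 7309623296 67.57  # average transfer rate
```

With the values above this works out at roughly 6.8 GiB moved at a little over 100 MiB per second. The Bytes column is also consistent with Blocks x Block_Size (1784576 x 4096).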

As always, comments are welcome.

David Alejo-Marcos.

David Marcos Consulting Ltd.

Archive area +RECO has -7440384 free KB remaining (Usable_file_MB is negative) 17 July 2011

Posted by David Alejo Marcos in ASM, Exadata, Oracle 11.2, RMAN. Tags: ASM, Exadata, Oracle 11.2, RMAN

I must say, this has been a busy weekend.

We have been promoting a release to production and a guaranteed restore point was created on Friday as a rollback strategy. On Sunday I was called as we started to receive alerts.

The problem:

Our monitoring system started to send emails and SNMP Traps with the following alerts:

OEM alert for Automatic Storage Management +ASM4_ssssss4: Disk group RECO has used 100% of safely usable free space. (Current Disk Group Used % of Safely Usable value: 100)
OEM alert for Automatic Storage Management +ASM2_ssssss2: Disk group RECO has used 100% of safely usable free space. (Current Disk Group Used % of Safely Usable value: 100)
OEM alert for Automatic Storage Management +ASM3_ssssss3: Disk group RECO has used 100% of safely usable free space. (Current Disk Group Used % of Safely Usable value: 100)
OEM alert for Automatic Storage Management +ASM7_ssssss7: Disk group RECO has used 100% of safely usable free space. (Current Disk Group Used % of Safely Usable value: 100)
OEM alert for Automatic Storage Management +ASM8_ssssss8: Disk group RECO has used 100% of safely usable free space. (Current Disk Group Used % of Safely Usable value: 100)
OEM alert for Automatic Storage Management +ASM6_ssssss6: Disk group RECO has used 100% of safely usable free space. (Current Disk Group Used % of Safely Usable value: 100)
OEM alert for Automatic Storage Management +ASM1_ssssss1: Disk group RECO has used 100% of safely usable free space. (Current Disk Group Used % of Safely Usable value: 100)
OEM alert for Automatic Storage Management +ASM5_ssssss5: Disk group RECO has used 100% of safely usable free space. (Current Disk Group Used % of Safely Usable value: 100)
OEM alert for Database Instance : Archive area +RECO has -7440384 free KB remaining. (Current Free Archive Area (KB) value: -7440384)
OEM alert for Database Instance : Archive area +RECO has -21725184 free KB remaining. (Current Free Archive Area (KB) value: -21725184)

Not very nice in any situation, and even less so when you are in the middle of a critical, highly visible release.

I did have a look and this is what I found.

The solution:

The first thing I did was to check the Flash Recovery Area, as it is configured to write to our +RECO diskgroup:

NAME                    USED_MB   LIMIT_MB   PCT_USED
-------------------- ---------- ---------- ----------
+RECO                   1630569    2048000      79.62

Elapsed: 00:00:00.12

FILE_TYPE            PERCENT_SPACE_USED PERCENT_SPACE_RECLAIMABLE NUMBER_OF_FILES
-------------------- ------------------ ------------------------- ---------------
CONTROL FILE                          0                         0               1
REDO LOG                              0                         0               0
ARCHIVED LOG                          0                         0               0
BACKUP PIECE                      75.66                     75.66              86
IMAGE COPY                            0                         0               0
FLASHBACK LOG                      3.96                         0             707
FOREIGN ARCHIVED LOG                  0                         0               0

7 rows selected.

Elapsed: 00:00:01.62

The numbers looked OK; some backup files could be reclaimed (Oracle should do it automatically). Let's have a look at ASM:

oracle@ssss (+ASM1)$ asmcmd lsdg
State    Type    Rebal  Sector  Block       AU  Total_MB   Free_MB  Req_mir_free_MB  Usable_file_MB  Offline_disks  Voting_files  Name
MOUNTED  NORMAL  N         512   4096  4194304  55050240  26568696          5004567        10782064              0             N  DATA/
MOUNTED  NORMAL  N         512   4096  4194304  35900928   3192844          3263720          -35438              0             N  RECO/
MOUNTED  NORMAL  N         512   4096  4194304   4175360   4061640           379578         1841031              0             N  SYSTEMDG/
Elapsed: 00:00:01.62

Bingo, this is where our problem is. USABLE_FILE_MB (for the +RECO diskgroup) indicates the amount of free space that can safely be used, taking mirroring into account, while still being able to restore redundancy after a disk failure. A negative number in this column could be critical for the system in case of a disk failure, as we might not have enough space to restore the redundancy of all files on the surviving disks.
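The relationship between the lsdg columns can be sketched in a few lines of Python. This assumes the commonly documented formula for a NORMAL redundancy disk group, (Free_MB - Req_mir_free_MB) / 2, with a divisor of 3 for HIGH redundancy. The function name and the truncation of half megabytes are my own assumptions, chosen because they reproduce the figures reported above.

```python
def usable_file_mb(free_mb: int, req_mir_free_mb: int, copies: int = 2) -> int:
    """Estimate Usable_file_MB for a mirrored disk group.

    copies=2 models NORMAL redundancy, copies=3 models HIGH redundancy.
    Truncating the half megabyte is an assumption that matches lsdg here.
    """
    return int((free_mb - req_mir_free_mb) / copies)


# (Free_MB, Req_mir_free_MB, Usable_file_MB as reported by asmcmd lsdg)
diskgroups = {
    "DATA": (26568696, 5004567, 10782064),
    "RECO": (3192844, 3263720, -35438),
    "SYSTEMDG": (4061640, 379578, 1841031),
}

for name, (free, required, reported) in diskgroups.items():
    computed = usable_file_mb(free, required)
    print(f"{name:9s} computed={computed:>9d} reported={reported:>9d}")
```

For RECO, Free_MB has dropped below Req_mir_free_MB, which is exactly what turns the usable figure negative.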

Our backups go to ASM and we copy them to tape afterwards. Our retention policy on disk is 2 or 3 days, depending on the system.

When I checked the contents of the backupset directory on ASM, I found some old backups:

Type  Redund  Striped  Time  Sys  Name
                             Y    2011_07_17/
                             Y    2011_07_16/
                             Y    2011_07_15/
                             Y    2011_07_14/
                             Y    2011_07_13/
                             Y    2011_07_12/

Elapsed: 00:00:01.62

To delete those old backups I executed the following script from RMAN:

RMAN> delete backup tag EOD_DLY_110712 device type disk;

allocated channel: ORA_DISK_1
channel ORA_DISK_1: SID=1430 instance=<instance> device type=DISK
allocated channel: ORA_DISK_2
channel ORA_DISK_2: SID=2138 instance=<instance> device type=DISK
allocated channel: ORA_DISK_3
channel ORA_DISK_3: SID=8 instance=<instance> device type=DISK
allocated channel: ORA_DISK_4
channel ORA_DISK_4: SID=150 instance=<instance> device type=DISK

List of Backup Pieces
BP Key  BS Key  Pc# Cp# Status      Device Type Piece Name
------- ------- --- --- ----------- ----------- ----------
8292    4020    1   1   AVAILABLE   DISK        +RECO//backupset/2011_07_12/sssss_eod_dly_110712_0.nnnn.nnnnn
8293    4021    1   1   AVAILABLE   DISK        +RECO//backupset/2011_07_12/sssss_eod_dly_110712_0.nnnn.nnnnn

Do you really want to delete the above objects (enter YES or NO)? yes
deleted backup piece
backup piece handle=+RECO//backupset/2011_07_12/sssss_eod_dly_110712_0.nnnn.nnnnnn RECID=8292 STAMP=nnnn
deleted backup piece
backup piece handle=+RECO//backupset/2011_07_12/sssss_eod_dly_110712_0.nnnn.nnnnnn RECID=8293 STAMP=nnnn
Deleted 2 objects

Elapsed: 00:00:01.62

After deleting two more old backups, the numbers looked much better:

State    Type    Rebal  Sector  Block       AU  Total_MB   Free_MB  Req_mir_free_MB  Usable_file_MB  Offline_disks  Voting_files  Name
MOUNTED  NORMAL  N         512   4096  4194304  55050240  26568696          5004567        10782064              0             N  DATA/
MOUNTED  NORMAL  N         512   4096  4194304  35900928   3548260          3263720          142270              0             N  RECO/
MOUNTED  NORMAL  N         512   4096  4194304   4175360   4061640           379578         1841031              0             N  SYSTEMDG/
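To put the before and after figures for RECO side by side, here is a small Python sketch using only the numbers from the two lsdg listings. It illustrates that, in a NORMAL redundancy disk group, every raw megabyte released shows up as half a megabyte of usable space; the variable names are illustrative.

```python
# RECO figures from asmcmd lsdg, before and after deleting the old backups.
free_before_mb, free_after_mb = 3192844, 3548260
usable_before_mb, usable_after_mb = -35438, 142270

# Raw space released by the deletes (both mirror copies included).
raw_freed_mb = free_after_mb - free_before_mb

# Gain as seen through Usable_file_MB.
usable_gain_mb = usable_after_mb - usable_before_mb

print(f"Raw space freed : {raw_freed_mb} MB")
print(f"Usable gain     : {usable_gain_mb} MB")
print(f"Ratio           : {raw_freed_mb / usable_gain_mb}")
```

The ratio comes out at exactly 2, which is consistent with two-way mirroring on this disk group.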

Note.- There is another temporary fix. I could have changed db_recovery_file_dest to point to +DATA instead of +RECO but, as we have a guaranteed restore point, I thought releasing space from old backups was easier.

As always, comments are welcome.

David Alejo-Marcos.

David Marcos Consulting Ltd.

Exadata Administration – CellCLI 16 July 2011

Posted by David Alejo Marcos in Exadata, Oracle 11.2.

One of the big differences between Exadata and Non-Exadata systems is the necessity to administer the Exadata Storage Server.

The first time you configure the server side, it has to be done through KVM (Keyboard, Video, Mouse), meaning you will need to be physically near your server. Once the initial configuration steps have been performed, we are able to administer the Exadata Storage Servers over the network (e.g. over SSH, or by redirecting the KVM console to your desktop using the Sun Integrated Lights Out Manager (ILOM) remote client).

Once you are in the server, some tools will be available to us, including Cell Command Line Interface (CellCLI).

CellCLI is only available on the Storage Server. If we need to execute it from outside the storage server (or on more than one storage server), we will need to use the Distributed Command Line Interface (dcli).

CellCLI allows us to perform administration tasks such as startup, shutdown and monitoring, as well as maintenance.

The syntax to follow is:

[admin command / object command] [options];

Where:

1.- Admin Commands: Administration actions like START, QUIT, HELP, SPOOL.

2.- Object Command: Administration action to be performed on the cell objects like ALTER, CREATE, LIST.

3.- Options: Will allow us to specify additional parameters to the command.

[celladmin@ssssss ~]$ cellcli
CellCLI: Release 11.2.2.2.0 - Production on Sat Jul 16 17:28:08 BST 2011

Copyright (c) 2007, 2009, Oracle. All rights reserved.
Cell Efficiency Ratio: 17

CellCLI> list cell
ssss_admin online

CellCLI> exit
quitting

If you prefer to execute the command from the command line, you will have to use -e:

[celladmin@ssssss ~]$ cellcli -e list cell
ssss_admin online
[celladmin@ssssss ~]$

Or

[celladmin@sssss ~]$ cellcli -e help

HELP [topic]
Available Topics:
ALTER
ALTER ALERTHISTORY
ALTER CELL
ALTER CELLDISK
ALTER GRIDDISK
ALTER IORMPLAN
ALTER LUN
ALTER PHYSICALDISK
ALTER QUARANTINE
ALTER THRESHOLD
ASSIGN KEY
CALIBRATE
CREATE
CREATE CELL
CREATE CELLDISK
CREATE FLASHCACHE
CREATE GRIDDISK
CREATE KEY
CREATE QUARANTINE
CREATE THRESHOLD
DESCRIBE
DROP
DROP ALERTHISTORY
DROP CELL
DROP CELLDISK
DROP FLASHCACHE
DROP GRIDDISK
DROP QUARANTINE
DROP THRESHOLD
EXPORT CELLDISK
IMPORT CELLDISK
LIST
LIST ACTIVEREQUEST
LIST ALERTDEFINITION
LIST ALERTHISTORY
LIST CELL
LIST CELLDISK
LIST FLASHCACHE
LIST FLASHCACHECONTENT
LIST GRIDDISK
LIST IORMPLAN
LIST KEY
LIST LUN
LIST METRICCURRENT
LIST METRICDEFINITION
LIST METRICHISTORY
LIST PHYSICALDISK
LIST QUARANTINE
LIST THRESHOLD
SET
SPOOL
START

[celladmin@ssssss ~]$
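The -e form shown above lends itself to scripting. Below is a minimal Python sketch of a wrapper around it. The helper names cellcli_argv and run_cellcli are hypothetical, and the only CellCLI option relied upon is the -e flag used in this post. The call is guarded so that nothing is executed unless a cellcli binary is actually on the PATH.

```python
import shlex
import shutil
import subprocess
from typing import List


def cellcli_argv(command: str) -> List[str]:
    """Build the argument list for a one-shot `cellcli -e <command>` call.

    The command is split into words the same way the shell splits
    `cellcli -e list cell` in the examples above.
    """
    return ["cellcli", "-e", *shlex.split(command)]


def run_cellcli(command: str) -> str:
    """Run a CellCLI command locally and return its output (hypothetical helper)."""
    if shutil.which("cellcli") is None:
        raise RuntimeError("cellcli not found; run this on an Exadata Storage Server")
    result = subprocess.run(
        cellcli_argv(command), capture_output=True, text=True, check=True
    )
    return result.stdout


# Only the argument list is built here; nothing is executed.
print(cellcli_argv("list cell"))
```

On a storage server, run_cellcli("list cell") would be expected to return the same text as the interactive session above.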

So, what about security? CellCLI relies on OS authentication.

There are three predefined users on the Exadata Storage Server:

1.- cellmonitor: This user can perform monitoring tasks.

2.- celladmin: This user can perform most administration tasks, such as CREATE, ALTER and MODIFY on cell objects (but cannot perform CALIBRATE).

3.- root: This user has super-user privileges.

I plan my next post to be about dcli and how to minimise work by executing the same CellCLI command on more than one Exadata Storage Server without having to log in on all of them.

As always, comments are welcome.

Smart scan on Exadata and direct path reads. 10 July 2011

Posted by David Alejo Marcos in Uncategorized.

A couple of days ago I was called to investigate a performance problem on one of our development databases. People complained of slowness without much indication of what was slow.

Production, DR and UAT are running on a full rack Exadata machine while development runs on a single server.

The problem:

Database is slow. This is what I was given to start with. After monitoring the system I noticed several sessions were performing direct path reads, db file scattered reads and some other events.

I am aware of some people having experienced problems with Oracle doing direct path reads instead of db file scattered reads, so I thought we might be having the same problem.

The solution:

To cut a long story short, direct path reads were not our problem, at least not this time. I managed to find a long running session consuming many of the database resources. The session had been running for 7 days, details below:

SQL Text
------------------------------
UPDATE ....

Global Information
------------------------------
 Status   : EXECUTING
 Duration : 613427s

Global Stats
======================================================================================
| Elapsed |   Cpu   |    IO    | Concurrency | Buffer | Read | Read  | Write | Write |
| Time(s) | Time(s) | Waits(s) |  Waits(s)   |  Gets  | Reqs | Bytes | Reqs  | Bytes |
======================================================================================
|  617320 |   64631 |   552688 |        0.82 |   619M |  52M |   6TB |   47M |   2TB |
======================================================================================

SQL Plan Monitoring Details (Plan Hash Value=2108859552)
=====================================================================================================================================================================================
|    Id | Operation                   | Name    |    Rows |  Cost |      Time |  Start | Execs |     Rows |  Read |  Read | Write | Write | Mem | Temp | Activity | Activity Detail |
|       |                             |         | (Estim) |       | Active(s) | Active |       | (Actual) |  Reqs | Bytes |  Reqs | Bytes |     |      |      (%) |     (# samples) |
=====================================================================================================================================================================================
|     0 | UPDATE STATEMENT            |         |         |       |           |        |     1 |          |       |       |       |       |     |      |          |                 |
|     1 | UPDATE                      | TABLE1  |         |       |           |        |     1 |          |       |       |       |       |     |      |          |                 |
|     2 | FILTER                      |         |         |       |    613362 |    +63 |     1 |        0 |       |       |       |       |     |      |          |                 |
|     3 | NESTED LOOPS                |         |         |       |    613362 |    +63 |     1 |     6905 |       |       |       |       |     |      |          |                 |
|     4 | NESTED LOOPS                |         |       9 | 27146 |    613362 |    +63 |     1 |     108K |       |       |       |       |     |      |          |                 |
|     5 | SORT UNIQUE                 |         |     839 | 24624 |    613405 |    +20 |     1 |     6905 |    38 |   7MB |  1243 | 257MB | 10M | 333M |          |                 |
|     6 | TABLE ACCESS FULL           | TABLE1  |     839 | 24624 |        44 |    +20 |     1 |       5M |  1029 | 499MB |       |       |     |      |          |                 |
|  -> 7 | INDEX RANGE SCAN            | INDEX01 |       6 |     2 |    613364 |    +63 |  6905 |     108K |   792 |   6MB |       |       |     |      |          |                 |
|  -> 8 | TABLE ACCESS BY INDEX ROWID | TABLE1  |       1 |     6 |    613364 |    +63 |  108K |     6905 | 48839 | 382MB |       |       |     |      |          |                 |
|     9 | FILTER                      |         |         |       |    613334 |    +91 |  6905 |     6904 |       |       |       |       |     |      |          |                 |
|    10 | HASH GROUP BY               |         |     798 | 24625 |    252603 |    +65 |  6905 |       4G |   24M |   1TB |   47M | 488GB | 14M |   8M |          |                 |
| -> 11 | TABLE ACCESS FULL           | TABLE1  |     839 | 24624 |    613364 |    +63 |  6905 |       4G |   29M | 416GB |       |       |     |      |          |                 |
=====================================================================================================================================================================================
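To make the Global Stats a little easier to read, here is a short Python sketch that converts the duration into days and shows where the elapsed time went. It uses only figures from the report above; the variable names are illustrative.

```python
from datetime import timedelta

# Figures copied from the SQL Monitor report above.
duration_s = 613427   # Duration (Global Information)
elapsed_s = 617320    # Elapsed Time(s)
cpu_s = 64631         # Cpu Time(s)
io_wait_s = 552688    # IO Waits(s)

# Wall-clock duration in a human-readable form.
duration = timedelta(seconds=duration_s)

# Share of the elapsed time spent waiting on I/O and running on CPU.
io_pct = round(100 * io_wait_s / elapsed_s, 1)
cpu_pct = round(100 * cpu_s / elapsed_s, 1)

print(f"Duration : {duration}")
print(f"IO waits : {io_pct}% of elapsed time")
print(f"CPU      : {cpu_pct}% of elapsed time")
```

Roughly nine tenths of the elapsed time is I/O wait. That fits the plan: the full scan at step 11 was executed 6905 times.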

Impressive, right?

The query was terminated and it is being reviewed on development but, why didn’t we have problems on prod?

It is my belief that the reason is smart scan on the Exadata storage :D.

Let's have a look at how the query looks on Prod:

1.- Is it enabled?

PROD> show parameter cell_offload_processing

NAME                    TYPE     VALUE
----------------------- -------- ------------------------------
cell_offload_processing boolean  TRUE

2.- Check the explain plan:

SQL> SELECT * FROM table(DBMS_XPLAN.DISPLAY_CURSOR(sql_id =>'4xxxxxxxj',format =>'ALLSTATS LAST'));

PLAN_TABLE_OUTPUT
----------------------------------------------------------------------------------------------------
---------------------------------------------------------------------------------------
| Id  | Operation                       | Name     | E-Rows |  OMem |  1Mem | Used-Mem |
---------------------------------------------------------------------------------------
|   0 | UPDATE STATEMENT                |          |        |       |       |          |
|   1 |  UPDATE                         | TABLE1   |        |       |       |          |
|*  2 |   HASH JOIN ANTI                |          |      9 |  3957K|  1689K| 4524K (0)|
|   3 |    NESTED LOOPS                 |          |        |       |       |          |
|   4 |     NESTED LOOPS                |          |      9 |       |       |          |
|   5 |      SORT UNIQUE                |          |    839 |  2037K|   607K| 1810K (0)|
|*  6 |       TABLE ACCESS STORAGE FULL| TABLE1   |    839 |       |       |          |
|*  7 |      INDEX RANGE SCAN           | INDEX01  |      6 |       |       |          |
|*  8 |     TABLE ACCESS BY INDEX ROWID| TABLE1   |      1 |       |       |          |
|   9 |    VIEW                         | VW_NSO_1 |    839 |       |       |          |
|  10 |     SORT GROUP BY               |          |    839 |  2250K|   629K| 1999K (0)|
|* 11 |      TABLE ACCESS STORAGE FULL | TABLE1   |    839 |       |       |          |
---------------------------------------------------------------------------------------

Predicate Information (identified by operation id):
---------------------------------------------------

   2 - access("COL_ID"=TO_NUMBER("COL_ID"))
   6 - storage(("COL01"=:B2 AND NVL("COL02",'N')='N' AND "COL04"=:B3
              AND "COL03"=TO_NUMBER(:B1)))
       filter(("COL01"=:B2 AND NVL("COL02",'N')='N' AND "COL04"=:B3
              AND "COL03"=TO_NUMBER(:B1)))
   7 - access("COL05"="COL05" AND "COL06"="COL06" AND
              "COL07"="COL07")
   8 - filter((NVL("COL02",'N')='N' AND "COL08"="COL08"))
  11 - storage(("COL01"=:B2 AND NVL("COL02",'N')='N' AND "COL04"=:B3
              AND "COL03"=TO_NUMBER(:B1)))
       filter(("COL01"=:B2 AND NVL("COL02",'N')='N' AND "COL04"=:B3
              AND "COL03"=TO_NUMBER(:B1)))

In the PLAN_TABLE_OUTPUT we see the storage predicates, indicating smart scan was being used; the runtime was 73 seconds:

PROD> select sql_id, executions, elapsed_time/1000000 from gv$sql
  2  where sql_id = '4xxxxxxxj';

SQL_ID        EXECUTIONS ELAPSED_TIME/1000000
------------- ---------- --------------------
'4xxxxxxxj'            1            73.166979
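Spotting the STORAGE operations and storage() predicates by eye is easy on a small plan, but harder on a long one. The Python sketch below scans DBMS_XPLAN text for both. The function name offload_candidates is hypothetical, and the sample is cut down from the plan above. Note that this only tells us which steps the optimizer marked as candidates for offloading; it is an assumption of this sketch that this is a good enough first indicator, and it says nothing about how much work the cells actually did.

```python
import re


def offload_candidates(plan_text: str) -> dict:
    """Return the plan step ids with STORAGE operations and storage() predicates."""
    storage_ops = set()
    storage_preds = set()
    for line in plan_text.splitlines():
        # Plan rows look like: |* 6 | TABLE ACCESS STORAGE FULL| TABLE1 | ...
        op = re.match(r"\|\*?\s*(\d+)\s*\|\s*(.+?)\s*\|", line)
        if op and " STORAGE " in f" {op.group(2)} ":
            storage_ops.add(int(op.group(1)))
        # Predicate rows look like: 6 - storage((...
        pred = re.match(r"\s*(\d+)\s+-\s+storage\(", line)
        if pred:
            storage_preds.add(int(pred.group(1)))
    return {"operations": sorted(storage_ops), "predicates": sorted(storage_preds)}


# Cut-down sample taken from the plan output above.
sample = """\
|* 2 | HASH JOIN ANTI | | 9 | 3957K| 1689K| 4524K (0)|
|* 6 | TABLE ACCESS STORAGE FULL| TABLE1 | 839 | | | |
|* 7 | INDEX RANGE SCAN | INDEX01 | 6 | | | |
|* 11 | TABLE ACCESS STORAGE FULL | TABLE1 | 839 | | | |
 6 - storage(("COL01"=:B2 AND NVL("COL02",'N')='N' AND "COL04"=:B3
 11 - storage(("COL01"=:B2 AND NVL("COL02",'N')='N' AND "COL04"=:B3
"""

result = offload_candidates(sample)
print(result)
```

For this plan both lists contain steps 6 and 11, the two full scans of TABLE1.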

I must say, I am quite impressed with Exadata but, would it make it more difficult to spot bad queries?

As always, comments are welcome.

Check status voting disk. 6 July 2011

Posted by David Alejo Marcos in Exadata, Oracle 11.2, RAC. Tags: Exadata, Oracle 11.2, RAC


This is a quick post on how to check the status of the voting disks.

The problem:

You receive a call/email from your 1st line support with something similar to:

---- [cssd(9956)]CRS-1604:CSSD voting file is offline: o/xxx.xxx.xx.xx/SYSTEMDG_CD_05_SSSSSin; details at (:CSSNM00058:) in /apps/oracle/grid_11.2/log/sssss/cssd/ocssd.log. ----

The solution:

Easy to check using crsctl:

oracle$ crsctl query css votedisk
##  STATE    File Universal Id                File Name Disk group
--  -----    -----------------                --------- ---------
 1. ONLINE   a0a559213xxxxxxffa67c2df0fdc12 (o/xxx.xxx.xx.xx/SYSTEMDG_CD_05_SSSSSin) [SYSTEMDG]
 2. ONLINE   121231203xxxxxxfc4523c5c34d900 (o/xxx.xxx.xx.xx/SYSTEMDG_CD_05_SSSSSin) [SYSTEMDG]
 3. ONLINE   a6b3c0281xxxxxxf3f6f9f1fd230ea (o/xxx.xxx.xx.xx/SYSTEMDG_CD_05_SSSSSin) [SYSTEMDG]
Located 3 voting disk(s).
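If this check has to be repeated often, the output can be parsed rather than read. The Python sketch below works on a copy of the output shown above. The function name parse_votedisks is hypothetical, and the regular expression assumes the line layout seen in this post. In practice the text would come from running crsctl query css votedisk, which is not done here.

```python
import re


def parse_votedisks(output: str) -> list:
    """Extract number, state, file name and disk group for each voting file."""
    pattern = re.compile(r"^\s*(\d+)\.\s+(\S+)\s+\S+\s+\((.+?)\)\s+\[(\w+)\]")
    disks = []
    for line in output.splitlines():
        match = pattern.match(line)
        if match:
            disks.append({
                "num": int(match.group(1)),
                "state": match.group(2),
                "file": match.group(3),
                "diskgroup": match.group(4),
            })
    return disks


# Output copied from the crsctl command above (already anonymised).
sample = """\
##  STATE    File Universal Id                File Name Disk group
--  -----    -----------------                --------- ---------
 1. ONLINE   a0a559213xxxxxxffa67c2df0fdc12 (o/xxx.xxx.xx.xx/SYSTEMDG_CD_05_SSSSSin) [SYSTEMDG]
 2. ONLINE   121231203xxxxxxfc4523c5c34d900 (o/xxx.xxx.xx.xx/SYSTEMDG_CD_05_SSSSSin) [SYSTEMDG]
 3. ONLINE   a6b3c0281xxxxxxf3f6f9f1fd230ea (o/xxx.xxx.xx.xx/SYSTEMDG_CD_05_SSSSSin) [SYSTEMDG]
Located 3 voting disk(s).
"""

disks = parse_votedisks(sample)
offline = [d for d in disks if d["state"] != "ONLINE"]
print(len(disks), "voting files,", len(offline), "not ONLINE")
```

Any entry left in the offline list would be the one to chase in the ocssd.log mentioned in the alert.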

As always, comments are welcome.
